Proper preparation for the up-to-date Databricks Certified Data Engineer Associate exam begins with Databricks-Certified-Data-Engineer-Associate preparation products, which are designed to deliver free Databricks-Certified-Data-Engineer-Associate questions and help you pass the Databricks-Certified-Data-Engineer-Associate test on your first attempt. Try the free Databricks-Certified-Data-Engineer-Associate demo right now.

Free online Databricks-Certified-Data-Engineer-Associate questions and answers (new version):

NEW QUESTION 1

A data engineer has a Job that has a complex run schedule, and they want to transfer that schedule to other Jobs.

Rather than manually selecting each value in the scheduling form in Databricks, which of the following tools can the data engineer use to represent and submit the schedule programmatically?

- A. pyspark.sql.types.DateType

- B. datetime

- C. pyspark.sql.types.TimestampType

- D. Cron syntax

- E. There is no way to represent and submit this information programmatically

Answer: D
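As a minimal sketch, the schedule can be written once as a cron expression and reused in each Job's settings. Databricks Jobs use Quartz cron syntax, which starts with a seconds field and uses `?` in either the day-of-month or day-of-week field. The payload shape below (`quartz_cron_expression`, `timezone_id`, `pause_status`, and the `job_id`/`new_settings` wrapper of the Jobs API update call) reflects my understanding of the Jobs API 2.1 and should be checked against the current API reference; the job IDs and the schedule itself are hypothetical, and the HTTP request is omitted.

```python
import json

# Hypothetical schedule: 06:30 on weekdays, in Quartz cron syntax.
# Fields: seconds, minutes, hours, day-of-month, month, day-of-week.
QUARTZ_CRON = "0 30 6 ? * MON-FRI"


def build_schedule(cron: str, timezone_id: str = "UTC") -> dict:
    """Return a schedule block shaped like the Jobs API `schedule` object (assumed shape)."""
    fields = cron.split()
    # Quartz expressions have 6 fields, with an optional 7th (year) field.
    if len(fields) not in (6, 7):
        raise ValueError(f"expected 6 or 7 Quartz cron fields, got {len(fields)}")
    return {
        "quartz_cron_expression": cron,
        "timezone_id": timezone_id,
        "pause_status": "UNPAUSED",
    }


# Reuse the same schedule for several (hypothetical) job IDs.
job_ids = [101, 102, 103]
update_requests = [
    {"job_id": job_id, "new_settings": {"schedule": build_schedule(QUARTZ_CRON)}}
    for job_id in job_ids
]

# Each request body would be sent to the Jobs API update endpoint (not shown here).
print(json.dumps(update_requests[0], indent=2))
```

Keeping the expression in one constant is the point: the schedule is defined once and copied programmatically instead of being re-entered in the scheduling form for every Job.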

NEW QUESTION 2

A data engineer is attempting to drop a Spark SQL table my_table and runs the following command:

DROP TABLE IF EXISTS my_table;

After running this command, the engineer notices that the data files and metadata files have been deleted from the file system.

Which of the following describes why all of these files were deleted?

- A. The table was managed

- B. The table's data was smaller than 10 GB

- C. The table's data was larger than 10 GB

- D. The table was external

- E. The table did not have a location

Answer: A

Explanation:

For a managed table, the metastore manages both the data files and the metadata, so both are deleted when the table is dropped. For an external table, the data files live in an external location that the metastore does not manage, so dropping an external table removes only the metadata and leaves the data files in place.
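The toy model below illustrates the managed versus external behaviour; it is not how the metastore is implemented. Table metadata is kept in a dictionary and data files in a temporary directory. Dropping a managed table removes both, while dropping an external table removes only the metadata entry. The class, path, and table names are hypothetical.

```python
import os
import shutil
import tempfile
from dataclasses import dataclass


@dataclass
class TableEntry:
    location: str
    managed: bool


class ToyMetastore:
    """Toy model of DROP TABLE semantics; not the real metastore implementation."""

    def __init__(self) -> None:
        self.tables: dict[str, TableEntry] = {}

    def create(self, name: str, location: str, managed: bool) -> None:
        # Write a placeholder data file at the table location.
        os.makedirs(location, exist_ok=True)
        with open(os.path.join(location, "part-00000.parquet"), "w") as f:
            f.write("placeholder")
        self.tables[name] = TableEntry(location, managed)

    def drop_table_if_exists(self, name: str) -> None:
        entry = self.tables.pop(name, None)  # metadata is always removed
        if entry is not None and entry.managed:
            shutil.rmtree(entry.location)  # managed: data files are removed as well


root = tempfile.mkdtemp()
managed_path = os.path.join(root, "warehouse", "my_table")
external_path = os.path.join(root, "external", "ext_table")

ms = ToyMetastore()
ms.create("my_table", managed_path, managed=True)
ms.create("ext_table", external_path, managed=False)

ms.drop_table_if_exists("my_table")
ms.drop_table_if_exists("ext_table")
ms.drop_table_if_exists("missing_table")  # IF EXISTS: no error for a missing table

print(os.path.exists(managed_path))   # False: data deleted with the managed table
print(os.path.exists(external_path))  # True: external data files remain
```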

NEW QUESTION 3

A new data engineering team has been assigned to work on a project. The team will need access to the database customers in order to see what tables already exist. The team has its own group, named team.

Which of the following commands can be used to grant the necessary permission on the entire database to the new team?

- A. GRANT VIEW ON CATALOG customers TO team;

- B. GRANT CREATE ON DATABASE customers TO team;

- C. GRANT USAGE ON CATALOG team TO customers;

- D. GRANT CREATE ON DATABASE team TO customers;

- E. GRANT USAGE ON DATABASE customers TO team;

Answer: E

Explanation:

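The GRANT statement grants a privilege on a securable object, such as a catalog, database, table, or view, to a user or group. Its general form is:

GRANT privilege_type ON object TO principal;

The team only needs to see which tables already exist, so among the options the appropriate privilege is USAGE on the database customers, granted to the group team:

GRANT USAGE ON DATABASE customers TO team;

Options C and D reverse the object and the principal, B grants CREATE (the ability to create new objects) rather than access to existing ones, and A grants on a catalog rather than on the database. GRANT ALL PRIVILEGES would grant every privilege (CREATE, SELECT, MODIFY, USAGE, and so on), which is more than this task requires.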
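A small, hypothetical Python helper makes the word order explicit: the privilege comes first, then `ON` and the securable object, then `TO` and the principal. The privilege set is a deliberately partial subset of legacy table access control privilege names, assumed here for illustration only; the helper just builds a string and does not execute anything against a workspace.

```python
# Partial, illustrative subset of privilege names (assumption, not an exhaustive list).
KNOWN_PRIVILEGES = {"SELECT", "CREATE", "MODIFY", "USAGE", "READ_METADATA", "ALL PRIVILEGES"}


def grant_statement(privilege: str, object_type: str, object_name: str, principal: str) -> str:
    """Build `GRANT <privilege> ON <object_type> <object_name> TO <principal>;`."""
    privilege = privilege.upper()
    if privilege not in KNOWN_PRIVILEGES:
        raise ValueError(f"unknown privilege: {privilege}")
    # Backticks quote the principal, which matters for names containing spaces or '@'.
    return f"GRANT {privilege} ON {object_type.upper()} {object_name} TO `{principal}`;"


print(grant_statement("usage", "database", "customers", "team"))
# GRANT USAGE ON DATABASE customers TO `team`;
```

Because the object and the principal are separate, named parameters, the reversed forms in options C and D cannot be produced by accident.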

NEW QUESTION 4

A data engineer needs to use a Delta table as part of a data pipeline, but they do not know if they have the appropriate permissions.

In which of the following locations can the data engineer review their permissions on the table?

- A. Databricks Filesystem

- B. Jobs

- C. Dashboards

- D. Repos

- E. Data Explorer

Answer: E

NEW QUESTION 5

Which of the following statements regarding the relationship between Silver tables and Bronze tables is always true?

- A. Silver tables contain a less refined, less clean view of data than Bronze data.

- B. Silver tables contain aggregates while Bronze data is unaggregated.

- C. Silver tables contain more data than Bronze tables.

- D. Silver tables contain a more refined and cleaner view of data than Bronze tables.

- E. Silver tables contain less data than Bronze tables.

Answer: D

Explanation:

https://www.databricks.com/glossary/medallion-architecture

NEW QUESTION 6

A data engineer is maintaining a data pipeline. Upon data ingestion, the data engineer notices that the source data is starting to have a lower level of quality. The data engineer would like to automate the process of monitoring the quality level.

Which of the following tools can the data engineer use to solve this problem?

- A. Unity Catalog

- B. Data Explorer

- C. Delta Lake

- D. Delta Live Tables

- E. Auto Loader

Answer: D

Explanation:

https://docs.databricks.com/delta-live-tables/expectations.html

Delta Live Tables (DLT) lets data engineers declare data quality constraints, called expectations, on the datasets in a pipeline. Each expectation is a boolean condition checked against every record; depending on how it is declared, violating records are kept (and counted in the pipeline's quality metrics), dropped, or cause the update to fail. Because these checks run on every pipeline update and their results are recorded in the pipeline's event log, the quality level is monitored automatically rather than by hand. The other tools serve different purposes in a data engineering environment: Unity Catalog handles governance, Data Explorer is for browsing data and permissions, Delta Lake is the storage layer, and Auto Loader ingests files incrementally.
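Delta Live Tables expectations can keep violating records while counting them, drop them, or fail the update. The plain-Python sketch below mimics those three actions so their effect on a batch is visible. It is not the DLT API, which is declared with decorators such as `@dlt.expect`, `@dlt.expect_or_drop`, and `@dlt.expect_or_fail` in Python or `CONSTRAINT ... EXPECT (...)` in SQL; the records, rule, and function name are hypothetical.

```python
from typing import Callable

Record = dict
Rule = Callable[[Record], bool]


def apply_expectation(records: list[Record], rule: Rule, action: str = "warn") -> tuple[list[Record], dict]:
    """Mimic expectation actions: 'warn' keeps bad rows, 'drop' removes them, 'fail' raises."""
    failed = [r for r in records if not rule(r)]
    metrics = {"passed": len(records) - len(failed), "failed": len(failed)}
    if action == "warn":
        return records, metrics
    if action == "drop":
        return [r for r in records if rule(r)], metrics
    if action == "fail":
        if failed:
            raise RuntimeError(f"expectation violated by {len(failed)} record(s)")
        return records, metrics
    raise ValueError(f"unknown action: {action}")


# Hypothetical ingested batch; the rule requires a non-null, non-negative amount.
batch = [{"id": 1, "amount": 10.0}, {"id": 2, "amount": None}, {"id": 3, "amount": -5.0}]
valid_amount: Rule = lambda r: r["amount"] is not None and r["amount"] >= 0

kept, metrics = apply_expectation(batch, valid_amount, action="drop")
print(metrics)                  # {'passed': 1, 'failed': 2}
print([r["id"] for r in kept])  # [1]
```

Tracking the failed count on every run, whatever the action, is what turns a one-off check into ongoing monitoring of the quality level.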

NEW QUESTION 7

An engineering manager uses a Databricks SQL query to monitor ingestion latency for each data source. The manager checks the results of the query every day, but they are manually rerunning the query each day and waiting for the results.

Which of the following approaches can the manager use to ensure the results of the query are updated each day?

- A. They can schedule the query to refresh every 1 day from the SQL endpoint's page in Databricks SQL.

- B. They can schedule the query to refresh every 12 hours from the SQL endpoint's page in Databricks SQL.

- C. They can schedule the query to refresh every 1 day from the query's page in Databricks SQL.

- D. They can schedule the query to run every 1 day from the Jobs UI.

- E. They can schedule the query to run every 12 hours from the Jobs UI.

Answer: C

NEW QUESTION 8

A data engineer has realized that they made a mistake when making a daily update to a table. They need to use Delta time travel to restore the table to a version that is 3 days old. However, when the data engineer attempts to time travel to the older version, they are unable to restore the data because the data files have been deleted.

Which of the following explains why the data files are no longer present?

- A. The VACUUM command was run on the table

- B. The TIME TRAVEL command was run on the table

- C. The DELETE HISTORY command was run on the table

- D. The OPTIMIZE command was run on the table

- E. The HISTORY command was run on the table

Answer: A

Explanation:

The VACUUM command in Delta Lake removes data files that are no longer referenced by the current version of a table and that are older than the retention threshold (7 days by default). Time travel depends on those files, so once VACUUM deletes them, the versions that needed them can no longer be queried or restored. For a version only 3 days old to become unrecoverable, VACUUM must have been run with a retention period shorter than 3 days, which Delta Lake only allows after the safety check spark.databricks.delta.retentionDurationCheck.enabled has been disabled. The other options do not delete data files: OPTIMIZE compacts small files into larger ones but leaves the replaced files on disk until VACUUM runs, DESCRIBE HISTORY only lists table versions, and TIME TRAVEL and DELETE HISTORY are not Delta Lake commands.
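The simplified model below (not Delta Lake's actual implementation) shows why time travel breaks after VACUUM: each table version references a set of data files, VACUUM deletes files that the current version no longer references once they have been unreferenced for longer than the retention period, and restoring an old version fails if any of its files is gone. File names, dates, and retention values are hypothetical; Delta Lake's default retention threshold is 7 days.

```python
from datetime import datetime, timedelta

NOW = datetime(2024, 1, 10)

# Hypothetical table history: version -> data files it references.
versions = {
    0: {"a.parquet"},
    1: {"a.parquet", "b.parquet"},  # written 3 days ago
    2: {"c.parquet"},               # mistaken update written 2 days ago; current version
}
# When each file stopped being referenced by the current version (None = still live).
removed_at = {
    "a.parquet": NOW - timedelta(days=2),
    "b.parquet": NOW - timedelta(days=2),
    "c.parquet": None,
}
files_on_disk = set(removed_at)


def vacuum(retention: timedelta) -> set[str]:
    """Delete unreferenced files older than the retention threshold; return what was deleted."""
    cutoff = NOW - retention
    doomed = {f for f, ts in removed_at.items() if ts is not None and ts < cutoff and f in files_on_disk}
    files_on_disk.difference_update(doomed)
    return doomed


def can_time_travel(version: int) -> bool:
    """A version is restorable only if every file it references still exists."""
    return versions[version] <= files_on_disk


print(sorted(vacuum(timedelta(days=7))))   # []: default retention keeps the files
print(can_time_travel(1))                  # True
print(sorted(vacuum(timedelta(hours=1))))  # ['a.parquet', 'b.parquet']
print(can_time_travel(1))                  # False: version 1's files are gone
```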

NEW QUESTION 9

A data engineer wants to schedule their Databricks SQL dashboard to refresh once per day, but they only want the associated SQL endpoint to be running when it is necessary.

Which of the following approaches can the data engineer use to minimize the total running time of the SQL endpoint used in the refresh schedule of their dashboard?

- A. They can ensure the dashboard’s SQL endpoint matches each of the queries’ SQL endpoints.

- B. They can set up the dashboard’s SQL endpoint to be serverless.

- C. They can turn on the Auto Stop feature for the SQL endpoint.

- D. They can reduce the cluster size of the SQL endpoint.

- E. They can ensure the dashboard’s SQL endpoint is not one of the included query’s SQL endpoint.

Answer: C

NEW QUESTION 10

Which of the following commands can be used to write data into a Delta table while avoiding the writing of duplicate records?

- A. DROP

- B. IGNORE

- C. MERGE

- D. APPEND

- E. INSERT

Answer: C

Explanation:

To write data into a Delta table without creating duplicate records, use the MERGE command. MERGE compares incoming rows with the existing rows of the Delta table using a matching condition, typically on a primary key or other unique identifier, and then applies conditional actions in a single atomic operation: matching rows can be updated or left alone, and non-matching rows are inserted. A merge that declares only a WHEN NOT MATCHED THEN INSERT clause therefore appends new records while skipping any whose key already exists in the table. A plain append or INSERT, by contrast, writes every incoming row regardless of what the table already contains.
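As a plain-Python sketch of the matching logic (not the Delta Lake engine), an insert-only merge keyed on a hypothetical `id` column appends only the source rows whose key is absent from the target. In Delta Lake SQL the corresponding statement would be `MERGE INTO target USING source ON target.id = source.id WHEN NOT MATCHED THEN INSERT *`, with placeholder table and column names. MERGE compares source rows with the target, not with each other, so the incoming batch is deduplicated first, as the Delta Lake documentation advises for this pattern.

```python
def dedupe(rows: list[dict], key: str) -> list[dict]:
    """Keep the first row for each key within a single batch."""
    seen: set = set()
    out = []
    for row in rows:
        if row[key] not in seen:
            seen.add(row[key])
            out.append(row)
    return out


def merge_insert_only(target: list[dict], source: list[dict], key: str = "id") -> list[dict]:
    """Mimic WHEN NOT MATCHED THEN INSERT: append source rows whose key is not in target."""
    existing_keys = {row[key] for row in target}
    # Deduplicate the source first; matching alone only checks against the target.
    new_rows = [row for row in dedupe(source, key) if row[key] not in existing_keys]
    return list(target) + new_rows


target = [{"id": 1, "v": "a"}, {"id": 2, "v": "b"}]
source = [{"id": 2, "v": "b"}, {"id": 3, "v": "c"}, {"id": 3, "v": "c"}]
result = merge_insert_only(target, source)
print([r["id"] for r in result])  # [1, 2, 3]
```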

NEW QUESTION 11

A data engineer has been given a new record of data:

id STRING = 'a1'

rank INTEGER = 6

rating FLOAT = 9.4

Which of the following SQL commands can be used to append the new record to an existing Delta table my_table?

- A. INSERT INTO my_table VALUES ('a1', 6, 9.4)

- B. my_table UNION VALUES ('a1', 6, 9.4)

- C. INSERT VALUES ( 'a1' , 6, 9.4) INTO my_table

- D. UPDATE my_table VALUES ('a1', 6, 9.4)

- E. UPDATE VALUES ('a1', 6, 9.4) my_table

Answer: A
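Option A is the standard `INSERT INTO ... VALUES` form. As a quick, engine-agnostic check, the snippet below uses SQLite (not Delta Lake or Spark SQL, so the column types are only approximate) to show that the statement in A appends the record while the clause order in C is rejected as a syntax error. The table definition mirrors the record above.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE my_table (id TEXT, rank INTEGER, rating REAL)")

# Option A: the standard INSERT INTO ... VALUES form appends one row.
conn.execute("INSERT INTO my_table VALUES ('a1', 6, 9.4)")
rows = conn.execute("SELECT * FROM my_table").fetchall()
print(rows)  # [('a1', 6, 9.4)]

# Option C puts VALUES before INTO, which is not valid SQL.
try:
    conn.execute("INSERT VALUES ('a1', 6, 9.4) INTO my_table")
    option_c_ok = True
except sqlite3.OperationalError as exc:
    option_c_ok = False
    print("rejected:", exc)
```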

NEW QUESTION 12

A data engineer has a Python notebook in Databricks, but they need to use SQL to accomplish a specific task within a cell. They still want all of the other cells to use Python without making any changes to those cells.

Which of the following describes how the data engineer can use SQL within a cell of their Python notebook?

- A. It is not possible to use SQL in a Python notebook

- B. They can attach the cell to a SQL endpoint rather than a Databricks cluster

- C. They can simply write SQL syntax in the cell

- D. They can add %sql to the first line of the cell

- E. They can change the default language of the notebook to SQL

Answer: D

NEW QUESTION 13

A data engineer and data analyst are working together on a data pipeline. The data engineer is working on the raw, bronze, and silver layers of the pipeline using Python, and the data analyst is working on the gold layer of the pipeline using SQL. The raw source of the pipeline is a streaming input. They now want to migrate their pipeline to use Delta Live Tables.

Which of the following changes will need to be made to the pipeline when migrating to Delta Live Tables?

- A. None of these changes will need to be made

- B. The pipeline will need to stop using the medallion-based multi-hop architecture

- C. The pipeline will need to be written entirely in SQL

- D. The pipeline will need to use a batch source in place of a streaming source

- E. The pipeline will need to be written entirely in Python

Answer: A

NEW QUESTION 14

Which of the following approaches should be used to send the Databricks Job owner an email in the case that the Job fails?

- A. Manually programming in an alert system in each cell of the Notebook

- B. Setting up an Alert in the Job page

- C. Setting up an Alert in the Notebook

- D. There is no way to notify the Job owner in the case of Job failure

- E. MLflow Model Registry Webhooks

Answer: B

Explanation:

https://docs.databricks.com/en/workflows/jobs/job-notifications.html

NEW QUESTION 15

A data organization leader is upset about the data analysis team’s reports being different from the data engineering team’s reports. The leader believes the siloed nature of their organization’s data engineering and data analysis architectures is to blame.

Which of the following describes how a data lakehouse could alleviate this issue?

- A. Both teams would autoscale their work as data size evolves

- B. Both teams would use the same source of truth for their work

- C. Both teams would reorganize to report to the same department

- D. Both teams would be able to collaborate on projects in real-time

- E. Both teams would respond more quickly to ad-hoc requests

Answer: B

Explanation:

A data lakehouse is designed to unify the data engineering and data analysis architectures by integrating features of both data lakes and data warehouses. One of the key benefits of a data lakehouse is that it provides a common, centralized data repository (the "lake") that serves as a single source of truth for data storage and analysis. This allows both data engineering and data analysis teams to work with the same consistent data sets, reducing discrepancies and ensuring that the reports generated by both teams are based on the same underlying data.

NEW QUESTION 16

Which of the following describes the relationship between Gold tables and Silver tables?

- A. Gold tables are more likely to contain aggregations than Silver tables.

- B. Gold tables are more likely to contain valuable data than Silver tables.

- C. Gold tables are more likely to contain a less refined view of data than Silver tables.

- D. Gold tables are more likely to contain more data than Silver tables.

- E. Gold tables are more likely to contain truthful data than Silver tables.

Answer: A

Explanation:

In some data processing pipelines, especially those following a typical "Bronze-Silver-Gold" data lakehouse architecture, Silver tables are often considered a more refined version of the raw or Bronze data. Silver tables may include data cleansing, schema enforcement, and some initial transformations. Gold tables, on the other hand, typically represent a stage where data is further enriched, aggregated, and processed to provide valuable insights for analytical purposes. This could indeed involve more aggregations compared to Silver tables.
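A minimal plain-Python sketch of the three layers follows; the records and column names are hypothetical, and a real pipeline would use Spark or Delta Live Tables. Bronze keeps raw records as ingested, Silver cleans and types them, and Gold aggregates Silver into a business-level summary.

```python
from collections import defaultdict

# Bronze: raw records as ingested, including a duplicate and an unparseable row.
bronze = [
    {"order_id": "1", "region": "EU", "amount": "20.0"},
    {"order_id": "2", "region": "US", "amount": "15.5"},
    {"order_id": "2", "region": "US", "amount": "15.5"},
    {"order_id": "3", "region": "EU", "amount": "n/a"},
]


def to_silver(rows: list[dict]) -> list[dict]:
    """Clean: cast types, drop unparseable amounts, and deduplicate on order_id."""
    seen, out = set(), []
    for r in rows:
        try:
            amount = float(r["amount"])
        except ValueError:
            continue  # drop rows whose amount cannot be parsed
        if r["order_id"] in seen:
            continue  # drop duplicate orders
        seen.add(r["order_id"])
        out.append({"order_id": int(r["order_id"]), "region": r["region"], "amount": amount})
    return out


def to_gold(rows: list[dict]) -> dict[str, float]:
    """Aggregate: total amount per region."""
    totals: dict[str, float] = defaultdict(float)
    for r in rows:
        totals[r["region"]] += r["amount"]
    return dict(totals)


silver = to_silver(bronze)
gold = to_gold(silver)
print(len(bronze), len(silver))  # 4 2
print(gold)                      # {'EU': 20.0, 'US': 15.5}
```

In this toy run Silver has fewer rows than Bronze, but that depends on the cleaning rules, which is why row-count statements about Silver and Bronze tables are not always true.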

NEW QUESTION 17

Which of the following code blocks will remove the rows where the value in column age is greater than 25 from the existing Delta table my_table and save the updated table?

- A. SELECT * FROM my_table WHERE age > 25;

- B. UPDATE my_table WHERE age > 25;

- C. DELETE FROM my_table WHERE age > 25;

- D. UPDATE my_table WHERE age <= 25;

- E. DELETE FROM my_table WHERE age <= 25;

Answer: C
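A quick check with SQLite, standing in for Delta Lake only to show the SQL semantics (Delta additionally records the delete as a new table version), confirms that C removes exactly the rows with `age > 25`, whereas E would remove the opposite set. The sample rows are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE my_table (name TEXT, age INTEGER)")
conn.executemany(
    "INSERT INTO my_table VALUES (?, ?)",
    [("ana", 22), ("ben", 25), ("cai", 31), ("dee", 47)],
)

# Option C: delete rows where age is greater than 25.
conn.execute("DELETE FROM my_table WHERE age > 25")
remaining = conn.execute("SELECT name, age FROM my_table ORDER BY age").fetchall()
print(remaining)  # [('ana', 22), ('ben', 25)]
```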

NEW QUESTION 18

A data engineer runs a statement every day to copy the previous day’s sales into the table transactions. Each day’s sales are in their own file in the location "/transactions/raw".

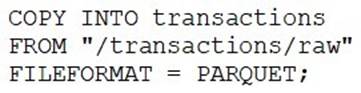

Today, the data engineer runs the following command to complete this task:

After running the command today, the data engineer notices that the number of records in table transactions has not changed.

Which of the following describes why the statement might not have copied any new records into the table?

- A. The format of the files to be copied was not included with the FORMAT_OPTIONS keyword.

- B. The names of the files to be copied were not included with the FILES keyword.

- C. The previous day’s file has already been copied into the table.

- D. The PARQUET file format does not support COPY INTO.

- E. The COPY INTO statement requires the table to be refreshed to view the copied rows.

Answer: C

Explanation:

https://docs.databricks.com/en/ingestion/copy-into/index.html

The COPY INTO SQL command loads data from a file location into a Delta table. It is a retriable and idempotent operation: files in the source location that have already been loaded are skipped. Since the record count did not change, the only consistent explanation is C: the previous day's file had already been loaded, so it was skipped and no new records were copied.
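COPY INTO's idempotent behaviour can be sketched in plain Python as a simplified model, not a description of how COPY INTO tracks its state internally. The loader remembers which source files it has already loaded and skips them, so rerunning it after the day's file has been loaded adds no rows. The directory layout and file names are hypothetical, and COPY INTO's `force` copy option, which reloads files regardless, is not modeled.

```python
import os
import tempfile

raw_dir = tempfile.mkdtemp()     # stands in for /transactions/raw
table: list[str] = []            # stands in for the transactions table
loaded_files: set[str] = set()   # files already loaded into the table


def copy_into() -> int:
    """Load rows from files not loaded before; return the number of rows added."""
    added = 0
    for name in sorted(os.listdir(raw_dir)):
        if name in loaded_files:
            continue  # already loaded: skipped, which makes reruns idempotent
        with open(os.path.join(raw_dir, name)) as f:
            rows = f.read().splitlines()
        table.extend(rows)
        loaded_files.add(name)
        added += len(rows)
    return added


def write_day(name: str, rows: list[str]) -> None:
    """Write one day's sales file into the raw directory."""
    with open(os.path.join(raw_dir, name), "w") as f:
        f.write("\n".join(rows))


write_day("2024-01-01.csv", ["sale-1", "sale-2"])
print(copy_into())  # 2
write_day("2024-01-02.csv", ["sale-3"])
print(copy_into())  # 1
print(copy_into())  # 0: the previous day's file was already copied
print(len(table))   # 3
```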

NEW QUESTION 19

Which of the following commands will return the location of database customer360?

- A. DESCRIBE LOCATION customer360;

- B. DROP DATABASE customer360;

- C. DESCRIBE DATABASE customer360;

- D. ALTER DATABASE customer360 SET DBPROPERTIES ('location' = '/user');

- E. USE DATABASE customer360;

Answer: C

Explanation:

To retrieve the location of a database named "customer360" in a database management system like Hive or Databricks, you can use the DESCRIBE DATABASE command followed by the database name. This command will provide information about the database, including its location.

NEW QUESTION 20

......

Thanks for reading the newest Databricks-Certified-Data-Engineer-Associate exam dumps! We recommend trying the PREMIUM Certshared Databricks-Certified-Data-Engineer-Associate dumps in VCE and PDF format here: https://www.certshared.com/exam/Databricks-Certified-Data-Engineer-Associate/ (87 Q&As Dumps)