We provide real Professional-Data-Engineer exam questions and answers braindumps in two formats. Download PDF & Practice Tests. Pass Google Professional-Data-Engineer Exam quickly & easily. The Professional-Data-Engineer PDF type is available for reading and printing. You can print more and practice many times. With the help of our Google Professional-Data-Engineer dumps pdf and vce product and material, you can easily pass the Professional-Data-Engineer exam.

Google Professional-Data-Engineer Free Dumps Questions Online, Read and Test Now.

NEW QUESTION 1

You are deploying 10,000 new Internet of Things devices to collect temperature data in your warehouses globally. You need to process, store and analyze these very large datasets in real time. What should you do?

- A. Send the data to Google Cloud Datastore and then export to BigQuery.

- B. Send the data to Google Cloud Pub/Sub, stream Cloud Pub/Sub to Google Cloud Dataflow, and store the data in Google BigQuery.

- C. Send the data to Cloud Storage and then spin up an Apache Hadoop cluster as needed in Google Cloud Dataproc whenever analysis is required.

- D. Export logs in batch to Google Cloud Storage and then spin up a Google Cloud SQL instance, import the data from Cloud Storage, and run an analysis as needed.

Answer: B

NEW QUESTION 2

Your company is migrating its on-premises data warehousing solution to BigQuery. The existing data warehouse uses trigger-based change data capture (CDC) to apply daily updates from transactional database sources Your company wants to use BigQuery to improve its handling of CDC and to optimize the performance of the data warehouse Source system changes must be available for query m near-real time using tog-based CDC streams You need to ensure that changes in the BigQuery reporting table are available with minimal latency and reduced overhead. What should you do? Choose 2 answers

- A. Perform a DML INSERT UPDATE, or DELETE to replicate each CDC record in the reporting table m real time.

- B. Periodically DELETE outdated records from the reporting tablePeriodically use a DML MERGE to simultaneously perform DML INSER

- C. UPDATE, and DELETE operations in the reporting table

- D. Insert each new CDC record and corresponding operation type into a staging table in real time

- E. Insert each new CDC record and corresponding operation type into the reporting table in real time and use a materialized view to expose only the current version of each unique record.

Answer: BD

NEW QUESTION 3

Which of the following statements about Legacy SQL and Standard SQL is not true?

- A. Standard SQL is the preferred query language for BigQuery.

- B. If you write a query in Legacy SQL, it might generate an error if you try to run it with Standard SQL.

- C. One difference between the two query languages is how you specify fully-qualified table names (i.

- D. table names that include their associated project name).

- E. You need to set a query language for each dataset and the default is Standard SQL.

Answer: D

Explanation:

You do not set a query language for each dataset. It is set each time you run a query and the default query language is Legacy SQL.

Standard SQL has been the preferred query language since BigQuery 2.0 was released.

In legacy SQL, to query a table with a project-qualified name, you use a colon, :, as a separator. In standard SQL, you use a period, ., instead.

Due to the differences in syntax between the two query languages (such as with project-qualified table names), if you write a query in Legacy SQL, it might generate an error if you try to run it with Standard SQL.

Reference:

https://cloud.google.com/bigquery/docs/reference/standard-sql/migrating-from-legacy-sql

NEW QUESTION 4

In order to securely transfer web traffic data from your computer's web browser to the Cloud Dataproc cluster you should use a(n) .

- A. VPN connection

- B. Special browser

- C. SSH tunnel

- D. FTP connection

Answer: C

Explanation:

To connect to the web interfaces, it is recommended to use an SSH tunnel to create a secure connection to the master node.

Reference:

https://cloud.google.com/dataproc/docs/concepts/cluster-web-interfaces#connecting_to_the_web_interfaces

NEW QUESTION 5

Your analytics team wants to build a simple statistical model to determine which customers are most likely to work with your company again, based on a few different metrics. They want to run the model on Apache Spark, using data housed in Google Cloud Storage, and you have recommended using Google Cloud Dataproc to execute this job. Testing has shown that this workload can run in approximately 30 minutes on a 15-node cluster, outputting the results into Google BigQuery. The plan is to run this workload weekly. How should you optimize the cluster for cost?

- A. Migrate the workload to Google Cloud Dataflow

- B. Use pre-emptible virtual machines (VMs) for the cluster

- C. Use a higher-memory node so that the job runs faster

- D. Use SSDs on the worker nodes so that the job can run faster

Answer: A

NEW QUESTION 6

Your weather app queries a database every 15 minutes to get the current temperature. The frontend is powered by Google App Engine and server millions of users. How should you design the frontend to respond to a database failure?

- A. Issue a command to restart the database servers.

- B. Retry the query with exponential backoff, up to a cap of 15 minutes.

- C. Retry the query every second until it comes back online to minimize staleness of data.

- D. Reduce the query frequency to once every hour until the database comes back online.

Answer: B

Explanation:

https://cloud.google.com/sql/docs/mysql/manage-connections#backoff

NEW QUESTION 7

Your team is working on a binary classification problem. You have trained a support vector machine (SVM) classifier with default parameters, and received an area under the Curve (AUC) of 0.87 on the validation set. You want to increase the AUC of the model. What should you do?

- A. Perform hyperparameter tuning

- B. Train a classifier with deep neural networks, because neural networks would always beat SVMs

- C. Deploy the model and measure the real-world AUC; it’s always higher because of generalization

- D. Scale predictions you get out of the model (tune a scaling factor as a hyperparameter) in order to get the highest AUC

Answer: A

Explanation:

https://towardsdatascience.com/understanding-hyperparameters-and-its-optimisation-techniques-f0debba07568

NEW QUESTION 8

If you're running a performance test that depends upon Cloud Bigtable, all the choices except one below are recommended steps. Which is NOT a recommended step to follow?

- A. Do not use a production instance.

- B. Run your test for at least 10 minutes.

- C. Before you test, run a heavy pre-test for several minutes.

- D. Use at least 300 GB of data.

Answer: A

Explanation:

If you're running a performance test that depends upon Cloud Bigtable, be sure to follow these steps as you plan and execute your test:

Use a production instance. A development instance will not give you an accurate sense of how a production instance performs under load.

Use at least 300 GB of data. Cloud Bigtable performs best with 1 TB or more of data. However, 300 GB of data is enough to provide reasonable results in a performance test on a 3-node cluster. On larger clusters, use 100 GB of data per node.

Before you test, run a heavy pre-test for several minutes. This step gives Cloud Bigtable a chance to balance data across your nodes based on the access patterns it observes.

Run your test for at least 10 minutes. This step lets Cloud Bigtable further optimize your data, and it helps ensure that you will test reads from disk as well as cached reads from memory.

Reference: https://cloud.google.com/bigtable/docs/performance

NEW QUESTION 9

Cloud Bigtable is Google's Big Data database service.

- A. Relational

- B. mySQL

- C. NoSQL

- D. SQL Server

Answer: C

Explanation:

Cloud Bigtable is Google's NoSQL Big Data database service. It is the same database that Google uses for services, such as Search, Analytics, Maps, and Gmail.

It is used for requirements that are low latency and high throughput including Internet of Things (IoT), user analytics, and financial data analysis.

Reference: https://cloud.google.com/bigtable/

NEW QUESTION 10

You have several Spark jobs that run on a Cloud Dataproc cluster on a schedule. Some of the jobs run in sequence, and some of the jobs run concurrently. You need to automate this process. What should you do?

- A. Create a Cloud Dataproc Workflow Template

- B. Create an initialization action to execute the jobs

- C. Create a Directed Acyclic Graph in Cloud Composer

- D. Create a Bash script that uses the Cloud SDK to create a cluster, execute jobs, and then tear down the cluster

Answer: C

NEW QUESTION 11

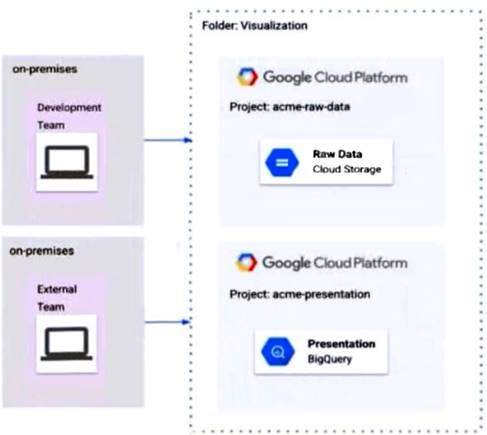

The Development and External teams nave the project viewer Identity and Access Management (1AM) role m a folder named Visualization. You want the Development Team to be able to read data from both Cloud Storage and BigQuery, but the External Team should only be able to read data from BigQuery. What should you do?

- A. Remove Cloud Storage IAM permissions to the External Team on the acme-raw-data project

- B. Create Virtual Private Cloud (VPC) firewall rules on the acme-raw-data protect that deny all Ingress traffic from the External Team CIDR range

- C. Create a VPC Service Controls perimeter containing both protects and BigQuery as a restricted API Add the External Team users to the perimeter s Access Level

- D. Create a VPC Service Controls perimeter containing both protects and Cloud Storage as a restricted AP

- E. Add the Development Team users to the perimeter's Access Level

Answer: C

NEW QUESTION 12

Your startup has never implemented a formal security policy. Currently, everyone in the company has access to the datasets stored in Google BigQuery. Teams have freedom to use the service as they see fit, and they have not documented their use cases. You have been asked to secure the data warehouse. You need to discover what everyone is doing. What should you do first?

- A. Use Google Stackdriver Audit Logs to review data access.

- B. Get the identity and access management IIAM) policy of each table

- C. Use Stackdriver Monitoring to see the usage of BigQuery query slots.

- D. Use the Google Cloud Billing API to see what account the warehouse is being billed to.

Answer: A

NEW QUESTION 13

You operate a logistics company, and you want to improve event delivery reliability for vehicle-based sensors. You operate small data centers around the world to capture these events, but leased lines that provide connectivity from your event collection infrastructure to your event processing infrastructure are unreliable, with unpredictable latency. You want to address this issue in the most cost-effective way. What should you do?

- A. Deploy small Kafka clusters in your data centers to buffer events.

- B. Have the data acquisition devices publish data to Cloud Pub/Sub.

- C. Establish a Cloud Interconnect between all remote data centers and Google.

- D. Write a Cloud Dataflow pipeline that aggregates all data in session windows.

Answer: B

NEW QUESTION 14

You work for a manufacturing company that sources up to 750 different components, each from a different supplier. You’ve collected a labeled dataset that has on average 1000 examples for each unique component. Your team wants to implement an app to help warehouse workers recognize incoming components based on a photo of the component. You want to implement the first working version of this app (as Proof-Of-Concept) within a few working days. What should you do?

- A. Use Cloud Vision AutoML with the existing dataset.

- B. Use Cloud Vision AutoML, but reduce your dataset twice.

- C. Use Cloud Vision API by providing custom labels as recognition hints.

- D. Train your own image recognition model leveraging transfer learning techniques.

Answer: A

NEW QUESTION 15

You are migrating your data warehouse to BigQuery. You have migrated all of your data into tables in a dataset. Multiple users from your organization will be using the data. They should only see certain tables based on their team membership. How should you set user permissions?

- A. Assign the users/groups data viewer access at the table level for each table

- B. Create SQL views for each team in the same dataset in which the data resides, and assign the users/groups data viewer access to the SQL views

- C. Create authorized views for each team in the same dataset in which the data resides, and assign the users/groups data viewer access to the authorized views

- D. Create authorized views for each team in datasets created for each tea

- E. Assign the authorized views data viewer access to the dataset in which the data reside

- F. Assign the users/groups data viewer access to the datasets in which the authorized views reside

Answer: A

NEW QUESTION 16

Your company is in a highly regulated industry. One of your requirements is to ensure individual users have access only to the minimum amount of information required to do their jobs. You want to enforce this requirement with Google BigQuery. Which three approaches can you take? (Choose three.)

- A. Disable writes to certain tables.

- B. Restrict access to tables by role.

- C. Ensure that the data is encrypted at all times.

- D. Restrict BigQuery API access to approved users.

- E. Segregate data across multiple tables or databases.

- F. Use Google Stackdriver Audit Logging to determine policy violations.

Answer: BDF

NEW QUESTION 17

Which action can a Cloud Dataproc Viewer perform?

- A. Submit a job.

- B. Create a cluster.

- C. Delete a cluster.

- D. List the jobs.

Answer: D

Explanation:

A Cloud Dataproc Viewer is limited in its actions based on its role. A viewer can only list clusters, get cluster details, list jobs, get job details, list operations, and get operation details.

Reference: https://cloud.google.com/dataproc/docs/concepts/iam#iam_roles_and_cloud_dataproc_operations_summary

NEW QUESTION 18

Your company uses a proprietary system to send inventory data every 6 hours to a data ingestion service in the cloud. Transmitted data includes a payload of several fields and the timestamp of the transmission. If there are any concerns about a transmission, the system re-transmits the data. How should you deduplicate the data most efficiency?

- A. Assign global unique identifiers (GUID) to each data entry.

- B. Compute the hash value of each data entry, and compare it with all historical data.

- C. Store each data entry as the primary key in a separate database and apply an index.

- D. Maintain a database table to store the hash value and other metadata for each data entry.

Answer: D

NEW QUESTION 19

You are building a new data pipeline to share data between two different types of applications: jobs generators and job runners. Your solution must scale to accommodate increases in usage and must accommodate the addition of new applications without negatively affecting the performance of existing ones. What should you do?

- A. Create an API using App Engine to receive and send messages to the applications

- B. Use a Cloud Pub/Sub topic to publish jobs, and use subscriptions to execute them

- C. Create a table on Cloud SQL, and insert and delete rows with the job information

- D. Create a table on Cloud Spanner, and insert and delete rows with the job information

Answer: A

NEW QUESTION 20

......

P.S. 2passeasy now are offering 100% pass ensure Professional-Data-Engineer dumps! All Professional-Data-Engineer exam questions have been updated with correct answers: https://www.2passeasy.com/dumps/Professional-Data-Engineer/ (370 New Questions)