Our pass rate is high to 98.9% and the similarity percentage between our DP-600 study guide and real exam is 90% based on our seven-year educating experience. Do you want achievements in the Microsoft DP-600 exam in just one try? I am currently studying for the Microsoft DP-600 exam. Latest Microsoft DP-600 Test exam practice questions and answers, Try Microsoft DP-600 Brain Dumps First.

Also have DP-600 free dumps questions for you:

NEW QUESTION 1

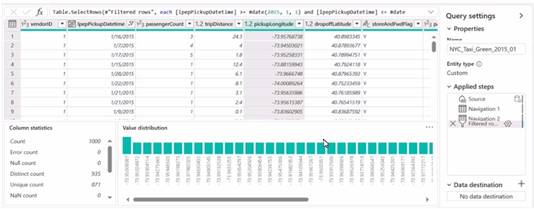

You have a Fabric workspace named Workspace 1 that contains a dataflow named Dataflow1. Dataflow! has a query that returns 2.000 rows. You view the query in Power Query as shown in the following exhibit.

What can you identify about the pickupLongitude column?

- A. The column has duplicate values.

- B. All the table rows are profiled.

- C. The column has missing values.

- D. There are 935 values that occur only once.

Answer: B

Explanation:

The pickupLongitude column has duplicate values. This can be inferred because the 'Distinct count' is 935 while the 'Count' is 1000, indicating that there are repeated values within the column. References = Microsoft Power BI documentation on data profiling could provide further insights into understanding and interpreting column statistics like these.

NEW QUESTION 2

HOTSPOT

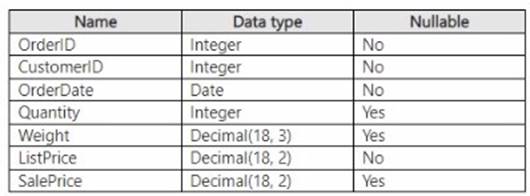

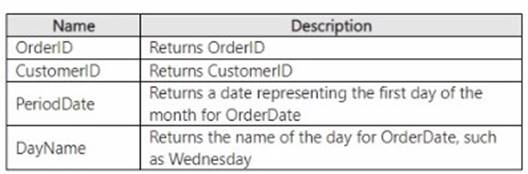

You have a Fabric warehouse that contains a table named Sales.Orders. Sales.Orders

contains the following columns.

You need to write a T-SQL query that will return the following columns.

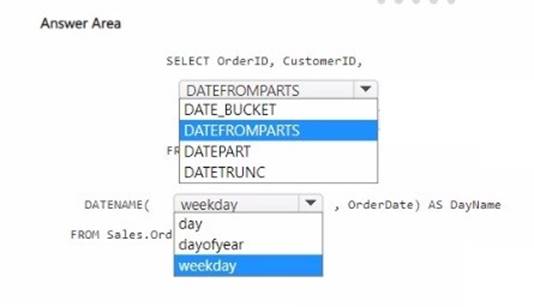

How should you complete the code? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Solution:

For the PeriodDate that returns the first day of the month for OrderDate, you should use DATEFROMPARTS as it allows you to construct a date from its individual components (year, month, day).

For the DayName that returns the name of the day for OrderDate, you should use

DATENAME with the weekday date part to get the full name of the weekday. The complete SQL query should look like this:

SELECT OrderID, CustomerID,

DATEFROMPARTS(YEAR(OrderDate), MONTH(OrderDate), 1) AS PeriodDate, DATENAME(weekday, OrderDate) AS DayName

FROM Sales.Orders

Select DATEFROMPARTS for the PeriodDate and weekday for the DayName in the answer area.

Does this meet the goal?

- A. Yes

- B. Not Mastered

Answer: A

NEW QUESTION 3

You have a Fabric tenant that contains a warehouse.

You use a dataflow to load a new dataset from OneLake to the warehouse.

You need to add a Power Query step to identify the maximum values for the numeric columns.

Which function should you include in the step?

- A. Tabl

- B. MaxN

- C. Table.Max

- D. Table.Range

- E. Table.Profile

Answer: B

Explanation:

The Table.Max function should be used in a Power Query step to identify the maximum values for the numeric columns. This function is designed to calculate the maximum value across each column in a table, which suits the requirement of finding maximum values for numeric columns. References = For detailed information on Power Query functions, including Table.Max, please refer to Power Query M function reference.

NEW QUESTION 4

You have a Fabric tenant that contains a warehouse.

You are designing a star schema model that will contain a customer dimension. The customer dimension table will be a Type 2 slowly changing dimension (SCD).

You need to recommend which columns to add to the table. The columns must NOT already exist in the source.

Which three types of columns should you recommend? Each correct answer presents part of the solution.

NOTE: Each correct answer is worth one point.

- A. an effective end date and time

- B. a foreign key

- C. a surrogate key

- D. a natural key

- E. an effective start date and time

Answer: ACE

Explanation:

For a Type 2 slowly changing dimension (SCD), you typically need to add the following types of columns that do not exist in the source system:

✑ An effective start date and time (E): This column records the date and time from which the data in the row is effective.

✑ An effective end date and time (A): This column indicates until when the data in the row was effective. It allows you to keep historical records for changes over time.

✑ A surrogate key (C): A surrogate key is a unique identifier for each row in a table, which is necessary for Type 2 SCDs to differentiate between historical and current records.

References: Best practices for designing slowly changing dimensions in data warehousing solutions, which include Type 2 SCDs, are commonly discussed in data warehousing and business intelligence literature and would be part of the modeling guidance in a Fabric tenant's documentation.

NEW QUESTION 5

You have a Fabric tenant that contains a new semantic model in OneLake. You use a Fabric notebook to read the data into a Spark DataFrame.

You need to evaluate the data to calculate the min, max, mean, and standard deviation values for all the string and numeric columns.

Solution: You use the following PySpark expression: df.show()

Does this meet the goal?

- A. Yes

- B. No

Answer: B

Explanation:

The df.show() method also does not meet the goal. It is used to show the contents of the DataFrame, not to compute statistical functions. References = The usage of the show() function is documented in the PySpark API documentation.

NEW QUESTION 6

You have a Fabric tenant that contains a warehouse.

Several times a day. the performance of all warehouse queries degrades. You suspect that Fabric is throttling the compute used by the warehouse.

What should you use to identify whether throttling is occurring?

- A. the Capacity settings

- B. the Monitoring hub

- C. dynamic management views (DMVs)

- D. the Microsoft Fabric Capacity Metrics app

Answer: B

Explanation:

To identify whether throttling is occurring, you should use the Monitoring hub (B). This provides a centralized place where you can monitor and manage the health, performance, and reliability of your data estate, and see if the compute resources are being throttled. References = The use of the Monitoring hub for performance management and troubleshooting is detailed in the Azure Synapse Analytics documentation.

NEW QUESTION 7

You have a Fabric tenant that contains a lakehouse named lakehouse1. Lakehouse1 contains a table named Table1.

You are creating a new data pipeline.

You plan to copy external data to Table1. The schema of the external data changes regularly.

You need the copy operation to meet the following requirements:

• Replace Table1 with the schema of the external data.

• Replace all the data in Table1 with the rows in the external data.

You add a Copy data activity to the pipeline. What should you do for the Copy data activity?

- A. From the Source tab, add additional columns.

- B. From the Destination tab, set Table action to Overwrite.

- C. From the Settings tab, select Enable staging

- D. From the Source tab, select Enable partition discovery

- E. From the Source tab, select Recursively

Answer: B

Explanation:

For the Copy data activity, from the Destination tab, setting Table action to Overwrite (B) will ensure that Table1 is replaced with the schema and rows of the external data, meeting the requirements of replacing both the schema and data of the destination table. References = Information about Copy data activity and table actions in Azure Data Factory, which can be applied to data pipelines in Fabric, is available in the Azure Data Factory documentation.

NEW QUESTION 8

You have a Fabric tenant that contains a data pipeline.

You need to ensure that the pipeline runs every four hours on Mondays and Fridays. To what should you set Repeat for the schedule?

- A. Daily

- B. By the minute

- C. Weekly

- D. Hourly

Answer: C

Explanation:

You should set Repeat for the schedule to Weekly (C). This allows you to specify the pipeline to run on specific days of the week, in this case, every four hours on Mondays and Fridays. References = Scheduling options for data pipelines are available in the Azure Data Factory documentation, which includes details on configuring recurring triggers.

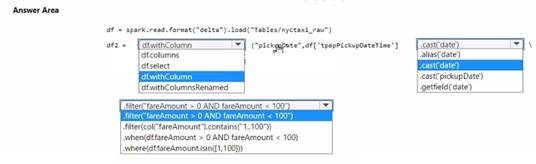

NEW QUESTION 9

HOTSPOT

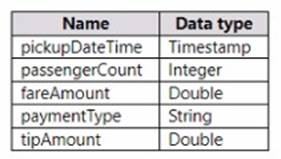

You have a Fabric tenant that contains a lakehouse named Lakehouse1. Lakehouse1 contains a table named Nyctaxi_raw. Nyctaxi_raw contains the following columns.

You create a Fabric notebook and attach it to lakehouse1.

You need to use PySpark code to transform the data. The solution must meet the following requirements:

• Add a column named pickupDate that will contain only the date portion of pickupDateTime.

• Filter the DataFrame to include only rows where fareAmount is a positive number that is less than 100.

How should you complete the code? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point.

Solution:

✑ Add the pickupDate column: .withColumn("pickupDate", df["pickupDateTime"].cast("date"))

✑ Filter the DataFrame: .filter("fareAmount > 0 AND fareAmount < 100")

In PySpark, you can add a new column to a DataFrame using the .withColumn method, where the first argument is the new column name and the second argument is the expression to generate the content of the new column. Here, we use the .cast("date") function to extract only the date part from a timestamp. To filter the DataFrame, you use the .filter method with a condition that selects rows where fareAmount is greater than 0 and less than 100, thus ensuring only positive values less than 100 are included.

Does this meet the goal?

- A. Yes

- B. Not Mastered

Answer: A

NEW QUESTION 10

You need to recommend a solution to prepare the tenant for the PoC.

Which two actions should you recommend performing from the Fabric Admin portal? Each correct answer presents part of the solution.

NOTE: Each correct answer is worth one point.

- A. Enable the Users can try Microsoft Fabric paid features option for specific security groups.

- B. Enable the Allow Azure Active Directory guest users to access Microsoft Fabric option for specific security groups.

- C. Enable the Users can create Fabric items option and exclude specific security groups.

- D. Enable the Users can try Microsoft Fabric paid features option for the entire organization.

- E. Enable the Users can create Fabric items option for specific security groups.

Answer: AE

Explanation:

The PoC is planned to be completed using a Fabric trial capacity, which implies that users involved in the PoC should be able to try paid features. However, this should be limited to specific security groups involved in the PoC to prevent the entire organization from accessing these features before the trial is proven successful (A). The ability for users to create Fabric items should also be enabled for specific security groups to ensure that only the relevant team members participating in the PoC can create items in the Fabric environment (E).

NEW QUESTION 11

You have a Fabric tenant that uses a Microsoft tower Bl Premium capacity. You need to enable scale-out for a semantic model. What should you do first?

- A. At the semantic model level, set Large dataset storage format to Off.

- B. At the tenant level, set Create and use Metrics to Enabled.

- C. At the semantic model level, set Large dataset storage format to On.

- D. At the tenant level, set Data Activator to Enabled.

Answer: C

Explanation:

To enable scale-out for a semantic model, you should first set Large dataset storage format to On (C) at the semantic model level. This configuration is necessary to handle larger datasets effectively in a scaled-out environment. References = Guidance on configuring large dataset storage formats for scale-out is available in the Power BI documentation.

NEW QUESTION 12

You have a Fabric tenant that contains a lakehouse named Lakehouse1. Lakehouse1 contains a subfolder named Subfolder1 that contains CSV files. You need to convert the CSV files into the delta format that has V-Order optimization enabled. What should you do from Lakehouse explorer?

- A. Use the Load to Tables feature.

- B. Create a new shortcut in the Files section.

- C. Create a new shortcut in the Tables section.

- D. Use the Optimize feature.

Answer: D

Explanation:

To convert CSV files into the delta format with Z-Order optimization enabled, you should use the Optimize feature (D) from Lakehouse Explorer. This will allow you to optimize the file organization for the most efficient querying. References = The process for converting and optimizing file formats within a lakehouse is discussed in the lakehouse management documentation.

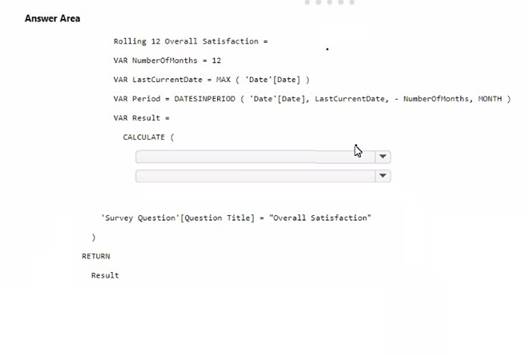

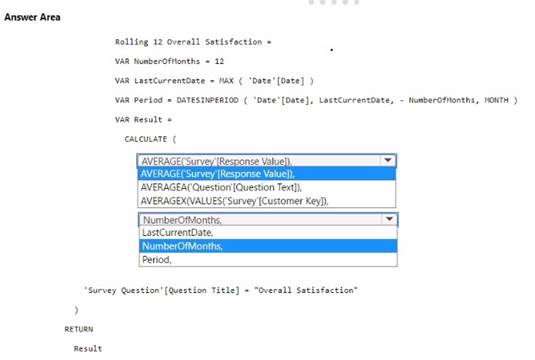

NEW QUESTION 13

HOTSPOT

You need to create a DAX measure to calculate the average overall satisfaction score.

How should you complete the DAX code? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Solution:

✑ The measure should use the AVERAGE function to calculate the average value.

✑ It should reference the Response Value column from the 'Survey' table.

✑ The 'Number of months' should be used to define the period for the average calculation.

To calculate the average overall satisfaction score using DAX, you would need to use the AVERAGE function on the response values related to satisfaction questions. The DATESINPERIOD function will help in calculating the rolling average over the last 12 months.

Does this meet the goal?

- A. Yes

- B. Not Mastered

Answer: A

NEW QUESTION 14

You have a Fabric tenant.

You are creating a Fabric Data Factory pipeline.

You have a stored procedure that returns the number of active customers and their average sales for the current month.

You need to add an activity that will execute the stored procedure in a warehouse. The returned values must be available to the downstream activities of the pipeline.

Which type of activity should you add?

- A. Stored procedure

- B. Get metadata

- C. Lookup

- D. Copy data

Answer: C

Explanation:

In a Fabric Data Factory pipeline, to execute a stored procedure and make the returned values available for downstream activities, the Lookup activity is used. This activity can retrieve a dataset from a data store and pass it on for further processing. Here’s how you would use the Lookup activity in this context:

✑ Add a Lookup activity to your pipeline.

✑ Configure the Lookup activity to use the stored procedure by providing the necessary SQL statement or stored procedure name.

✑ In the settings, specify that the activity should use the stored procedure mode.

✑ Once the stored procedure executes, the Lookup activity will capture the results and make them available in the pipeline’s memory.

✑ Downstream activities can then reference the output of the Lookup activity. References: The functionality and use of Lookup activity within Azure Data Factory is documented in Microsoft’s official documentation for Azure Data Factory, under the section

for pipeline activities.

NEW QUESTION 15

You have a Fabric tenant that contains a machine learning model registered in a Fabric workspace. You need to use the model to generate predictions by using the predict function in a fabric notebook. Which two languages can you use to perform model scoring? Each correct answer presents a complete solution. NOTE: Each correct answer is worth one point.

- A. T-SQL

- B. DAX EC.

- C. Spark SQL

- D. PySpark

Answer: CD

Explanation:

The two languages you can use to perform model scoring in a Fabric notebook using the predict function are Spark SQL (option C) and PySpark (option D). These are both part of the Apache Spark ecosystem and are supported for machine learning tasks in a Fabric environment. References = You can find more information about model scoring and supported languages in the context of Fabric notebooks in the official documentation on Azure Synapse Analytics.

NEW QUESTION 16

......

Thanks for reading the newest DP-600 exam dumps! We recommend you to try the PREMIUM 2passeasy DP-600 dumps in VCE and PDF here: https://www.2passeasy.com/dumps/DP-600/ (101 Q&As Dumps)